Digitalizing intuition

“From the brain straight to the hand and from the hand straight to the brain” is something just about everyone who studied a design subject will have heard at some point during their training. The general assumption is that you tackle problems, designs and ideas in a quite different way if you address them in an analog medium, i.e., jot them down or build a model. The fact is that this direct and often intuitive realization of ideas has a considerable influence on the design.

The advent of digital tools such as CAD and 3D modelling programs in the world of design means that working by hand often takes a back seat. The computer woos us with the often erroneous but seductive promise that it will now be easier to find the right form for things. And thanks to 3D printers it is indeed now simpler to build such digital models. But as a rule the interface between brain and CPU, with the hand on the mouse often acting as the intermediary, puts a drag on intuition and insists that the idea be structured to conform with the relevant program. It also calls for a degree of precision that is simply not necessary in the early phase of experimenting and bouncing ideas around, and in fact often hinders it. New developments that combine analog and digital design strategies indicate the future may be rosier.

A holodeck for designers?

Sci-fi films have shown us how it’s done: Be it “Matrix”, “Tron” or “Star Trek”, the melding of body and virtual space has interesting potential, particularly for the construction industry. Touring the digital model of your own design may prompt you to change it here and there. And you can potentially use it to convince a client or developer more readily of the quality of the idea – or so claim the marketing buffs at Zeiss, who have teamed up with Graphisoft to develop new “3D Virtual Reality Glasses” called “cinemizer® OLED”. These data specs enable you to tour a digital building, for example. But the Zeiss specs seem just that bit wooden compared to the Kickstarter success “Oculus Rift”.

Unlike “cinemizer® OLED”, the Oculus Rift “Virtual Reality” specs boast swift and sensitive sensors so that you can navigate in virtual space by simple movements of the head. The focus is firmly on the experience, as the specs are intended for the world of computer games. The logic of virtual gaming and that of construction are pretty similar, though, and it is only a matter of time before this trend spills over from gaming into the construction industry and gets adopted there.

To date, the tour of the virtual world remains a purely virtual experience, meaning it can’t yet be compared to the idea of the holodeck, where you can get your hands on the virtual world, feel it and interact with it. Current data spectacles don’t let you open doors or shift walls, which means that at present they’re limited as a design tool. But it’s only a matter of time.

Hey Presto – as if by magic

Imagine that, in the future, you could trace the shape of a building or an object in the air like a conductor, and that these gestures were then directly transformed into a digital 3D model. That pretty much describes the experiment of “Gestural [AR]chitecture”, a project Ryan Luke Johns is running at Princeton University. Here, using a smartphone, a Kinect and an array of sensors and computers, shapes are input by means of hand and arm movements. The smartphone’s voice recognition system also enables you to set the materials for the building thus designed.

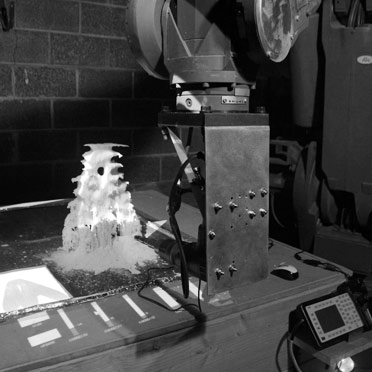

In Johns’ “Augmented Materiality” and “Interactive Milling” projects, the experimentation is with direct, physical intervention in the manufacturing process. Both projects combine augmented reality technologies with real-time computer simulations, sensory feedback and robot manufacturing tools. Using a tablet with AR software to manage the manufacturing tool, the designer or architect can intervene directly in the manufacturing process: Simple finger movements suffice to control the robot’s actions, and spontaneous changes can be realized at the flick of a wrist – without a revised CAD file having to be produced. Instead it is as if you were transferring your ideas directly from your brain to your hand to the tool and to the model. And the product gets adjusted accordingly. The architect or designer becomes a magician who transposes his ideas by gesture into the digital world.

Interactive model building

Virtual collaboration is already pretty easy using cloud-based solutions and video conferences. But working together on a digital model only functions to a limited extent, if at all. After all, even working together on a physical model isn’t always a cinch. Often debate only waters down a design, irrespective of whether we’re talking architecture, urban design or design pure and simple. The detour via language often makes things harder, too: Everyone uses terms and adjectives in different ways. How much easier and more vivid it would be if one could simply intervene in the digital model, directly and intuitively!

Daniel Leithinger and Sean Follmer, scientists at the “Tangible Media Group” attached to the “MIT Media Lab”, are researching the difficulties in such interaction. Their “inFORM” project is a display whose shape can be changed across all three dimensions and which is somewhat reminiscent of the “Pinpressions” décor idea. To use the system, you don’t need to be close to the display. A camera captures your movements and uses them to control the individual pixels in the display. This means that the 3D shape changes depending on what you want, and you can touch and feel it. The pixels are still pretty grainy, more reminiscent of Lego blocks than precise architectural renderings. But given the speed at which these technologies are advancing, it is quite conceivable that we will soon have a scenario where people in different locations can discuss a project and all those involved can intervene directly in the model and create new shapes in a matter of seconds.

Interfaces, as all the above examples illustrate, are the crux when it comes to the technology of designing with digital tools. The iPhone and iPad have made it clear that simple operation is the key to “merging” with technology. The computer gaming segment is an important driver here, as it has the requisite capital to invest, inventive minds, and a huge sales market. An example of such an advance is the Kinect hardware. What initially conquered the gaming market is now making forays into such sectors as medical technology, enabling operating theater equipment to be used without having to touch it.

If input is made intuitively by gesture and you can then interact with and process the models thus generated, designing could become a digitally extended form of working by hand. The benefit: the intuitive approach saves time. Perhaps it will help us get closer to constructing what we have in mind but can only realize to a limited extent using today’s programs.